When it comes to displaying the progress of a long-running operation to the user, there are many options available to a GUI designer:

- Change the cursor to an hourglass

- Change the caption on the status bar

- Show an animated glyph on the window

- Show a dialog box

A number of factors could affect which method you choose:

- How long does the operation typically run for? A few seconds? Minutes?

- Is the operation synchronous or asynchronous? If the operation is asynchronous, are there any restrictions on what the user can do while the operation is running?

- Can the user cancel the operation?

- Is the length of the operation known, or can it be easily estimated? Is it unpredictable?

- Does the operation provide meaningful feedback to the user? (e.g. status messages)

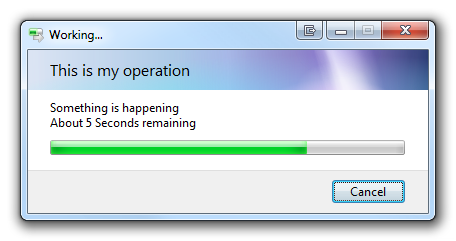

Shell Progress Dialogs provide a mechanism for displaying the progress of a long-running operation. They are suited to non-trivial operations that run for more than 10 seconds, and show status messages and a progress bar. They support both synchronous and asynchronous operations (though the latter are always preferable). They let the user abort the operation, and they can estimate the time remaining (computed from the progress percentage and time measurements taken as the operation progresses). If progress is not measurable (and the user is running Windows Vista or later), a marquee can be displayed instead of a normal progress bar.

This type of dialog box is part of the Windows shell, and is used by the operating system for a variety of purposes, from copying files to running the Windows Experience Index tests. Microsoft makes the dialog available to third-party code via COM, exposing the IProgressDialog interface and a concrete implementation of the type (CLSID_ProgressDialog). This makes it easy to use progress dialogs in C/C++ applications, but what about managed code and .NET?

Instantiating COM Types in .NET

The .NET Framework has a number of features that enable interoperability with COM. In general, to instantiate a COM type in .NET, you need to:

- Define the interface implemented by the type (in this case, IProgressDialog), based on its specification. Add any required metadata for marshalling method parameters and return types, as well as any enumerations required. Above all, remember to add the [ComImport] and [Guid] attributes to the interface definition and, because IProgressDialog derives directly from IUnknown, [InterfaceType(ComInterfaceType.InterfaceIsIUnknown)].

- Obtain a managed Type object for the COM type (not the interface), using the Type.GetTypeFromCLSID() method.

- Use Activator.CreateInstance() on the Type to instantiate it. Cast the resulting object to the interface type, and you’re ready to start calling methods.

- Don’t forget to call Marshal.FinalReleaseComObject() on the instance when you’re finished with it. A convenient way to ensure this is to place the cleanup code in a finally block, or implement IDisposable if the object is stored at instance scope in a class.

For IProgressDialog, the IID of the interface is {EBBC7C04-315E-11d2-B62F-006097DF5BD4} and the CLSID of the concrete class is {F8383852-FCD3-11d1-A6B9-006097DF5BD4}.
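As a rough illustration of the first step, the declarations below sketch how the interface and its flags might look in C#. The method order follows the IProgressDialog declaration in the Windows SDK headers. The enumeration member names (other than AutoTime, which appears in the sample later in this article) and the use of IntPtr for the reserved arguments are assumptions made for this sketch, and may differ from the declarations in the download.

```csharp
using System;
using System.Runtime.InteropServices;

// PROGDLG_* flag values from the Windows SDK; member names are assumed.
[Flags]
public enum PROGDLG : uint
{
    Normal          = 0x00,
    Modal           = 0x01,
    AutoTime        = 0x02, // estimate time remaining, shown on line 3
    NoTime          = 0x04,
    NoMinimize      = 0x08,
    NoProgressBar   = 0x10,
    MarqueeProgress = 0x20, // Windows Vista and later
    NoCancel        = 0x40  // Windows Vista and later
}

[ComImport]
[Guid("EBBC7C04-315E-11d2-B62F-006097DF5BD4")]
[InterfaceType(ComInterfaceType.InterfaceIsIUnknown)]
public interface IProgressDialog
{
    // Methods must appear in vtable order.
    void StartProgressDialog(
        IntPtr hwndParent,
        [MarshalAs(UnmanagedType.IUnknown)] object punkEnableModless,
        PROGDLG dwFlags,
        IntPtr pvReserved);

    void StopProgressDialog();

    void SetTitle([MarshalAs(UnmanagedType.LPWStr)] string pwzTitle);

    void SetAnimation(IntPtr hInstAnimation, uint idAnimation);

    // Returns BOOL rather than HRESULT, so the signature is preserved.
    [PreserveSig]
    [return: MarshalAs(UnmanagedType.Bool)]
    bool HasUserCancelled();

    void SetProgress(uint dwCompleted, uint dwTotal);

    void SetProgress64(ulong ullCompleted, ulong ullTotal);

    void SetLine(
        uint dwLineNum,
        [MarshalAs(UnmanagedType.LPWStr)] string pwzString,
        [MarshalAs(UnmanagedType.Bool)] bool fCompactPath,
        IntPtr pvReserved);

    void SetCancelMsg(
        [MarshalAs(UnmanagedType.LPWStr)] string pwzCancelMsg,
        IntPtr pvReserved);

    void Timer(uint dwTimerAction, IntPtr pvReserved);
}
```

With these declarations, reserved arguments are passed as IntPtr.Zero. The sample later in this article passes null to SetCancelMsg(), which suggests that the declaration in the download types that parameter differently.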

Using IProgressDialog

The MSDN Documentation for the Windows Shell API details the lifecycle of the IProgressDialog object and how to use it. In simple terms:

- Set up the progress dialog before it is displayed to the user by calling SetTitle() and SetCancelMsg().

- Show the dialog using StartProgressDialog(). A series of PROGDLG flags control the behaviour and appearance of the dialog box.

- Show status messages using the SetLine() method. You can use up to three lines of text, or two if you let the dialog calculate the estimated time remaining automatically (it uses the third line for the estimate).

- As you perform work, call SetProgress() to update the progress bar. Each call lets you specify the current value as well as the maximum value for the bar. The user can click the cancel button at any time, so you should also check its status regularly using the HasUserCancelled() method.

- When the operation has finished, call StopProgressDialog(). Note that, once you close the dialog box, you cannot show it again; you must create a new instance for each operation.

Putting these steps together:

    // create the shell progress dialog from its CLSID
    Type progressDialogType = Type.GetTypeFromCLSID(new Guid("{F8383852-FCD3-11d1-A6B9-006097DF5BD4}"));
    IProgressDialog progressDialog = (IProgressDialog)Activator.CreateInstance(progressDialogType);

    try {
        // set up dialog and display to the user
        progressDialog.SetTitle("Progress dialog");
        progressDialog.SetCancelMsg("Aborting...", null);
        progressDialog.StartProgressDialog(Form.ActiveForm.Handle, null, PROGDLG.AutoTime, IntPtr.Zero);

        // do work
        progressDialog.SetLine(1, "Working...", false, IntPtr.Zero);
        progressDialog.SetLine(2, "Please wait while the operation completes", false, IntPtr.Zero);

        for (uint i = 0; i < 100; i++) {
            // stop as soon as the user clicks Cancel
            if (progressDialog.HasUserCancelled()) {
                break;
            }
            else {
                progressDialog.SetProgress(i, 100);
                Application.DoEvents();  // keep the calling form responsive
                Thread.Sleep(150);       // simulate a unit of work
            }
        }

        // close dialog
        progressDialog.StopProgressDialog();
    }
    finally {
        // release the underlying COM object
        Marshal.FinalReleaseComObject(progressDialog);
        progressDialog = null;
    }

A Managed Wrapper for IProgressDialog

Working directly with COM types is not recommended, due in part to the need to release resources manually, as well as having to work with the archaic programming model, marshalled types, structures, etc. COM types are also limited in comparison to .NET types, in that they do not (natively) support events or properties. For these reasons, I decided to create a managed wrapper for IProgressDialog.

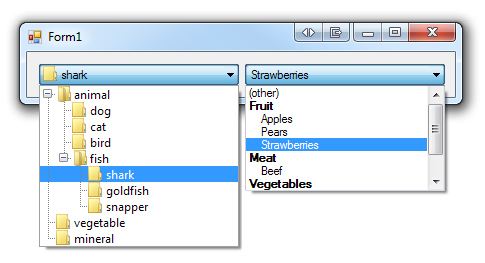

As with other common dialog types already available in .NET (e.g. OpenFileDialog), my implementation inherits from the Component class, allowing it to be dropped onto the design surface of a Form or other component in Visual Studio. Component also has the advantage of implementing the IDisposable pattern, removing the need to write some boilerplate code.
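To make the disposal point concrete, here is a minimal sketch of a Component subclass that owns a COM object and releases it in Dispose(bool). The class name and the EnsureNativeDialog() helper are hypothetical and exist only for this illustration; this is not the code from the download.

```csharp
using System;
using System.ComponentModel;
using System.Runtime.InteropServices;

// Hypothetical class name; illustrates the disposal pattern only.
public class ShellDialogComponentSketch : Component
{
    // CLSID_ProgressDialog, as quoted earlier in the article.
    private static readonly Guid ProgressDialogClsid =
        new Guid("F8383852-FCD3-11d1-A6B9-006097DF5BD4");

    // Runtime callable wrapper for the COM object; null until first use.
    private object nativeDialog;

    // Creates the COM object on demand (Windows only).
    protected object EnsureNativeDialog()
    {
        if (nativeDialog == null)
        {
            Type type = Type.GetTypeFromCLSID(ProgressDialogClsid);
            nativeDialog = Activator.CreateInstance(type);
        }
        return nativeDialog;
    }

    protected override void Dispose(bool disposing)
    {
        // Only touch the wrapper on an explicit Dispose(); on the
        // finalizer path the runtime cleans up the wrapper itself.
        if (disposing && nativeDialog != null)
        {
            Marshal.FinalReleaseComObject(nativeDialog);
            nativeDialog = null;
        }
        base.Dispose(disposing);
    }
}
```

Because Component implements IDisposable, a caller can place an instance in a using block, and calling Dispose() more than once is harmless here because the field is cleared after the first release.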

My goals for the wrapper were to:

- Replace method calls with properties (where possible).

- Provide get accessors to complete the functionality of each property.

- Replace the flag options with separate properties (to improve ease of use and intuitiveness).

- Provide sensible default values for properties.

- Simplify the operations by removing parameters and relying more on the object state.

- Provide cleanup code in a Dispose() method.

- Remove the need to re-instantiate the component for each operation.

- Further simplify the use of the component by automatically closing the dialog when the progress reaches 100%.
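As an illustration of the third goal, the sketch below shows one way separate Boolean properties could be folded back into the PROGDLG flags value that StartProgressDialog() expects. The class and property names are hypothetical and the defaults are only a guess at what "sensible" might mean; the ProgressDialog class in the download may be organised quite differently.

```csharp
using System;

// Declared here so the sketch stands alone; values are the PROGDLG_*
// constants from the Windows SDK headers, member names are assumed.
[Flags]
public enum PROGDLG : uint
{
    Normal          = 0x00,
    Modal           = 0x01,
    AutoTime        = 0x02,
    NoTime          = 0x04,
    NoMinimize      = 0x08,
    NoProgressBar   = 0x10,
    MarqueeProgress = 0x20,
    NoCancel        = 0x40
}

// Hypothetical class and property names.
public class ProgressDialogOptionsSketch
{
    public bool Modal { get; set; }
    public bool ShowTimeRemaining { get; set; }
    public bool AllowMinimize { get; set; }
    public bool ShowProgressBar { get; set; }
    public bool Marquee { get; set; }
    public bool AllowCancel { get; set; }

    public ProgressDialogOptionsSketch()
    {
        // Assumed defaults: a modeless dialog with a progress bar,
        // a time estimate, and working Minimize and Cancel buttons.
        ShowTimeRemaining = true;
        AllowMinimize = true;
        ShowProgressBar = true;
        AllowCancel = true;
    }

    // Translates the property values into a single flags value.
    public PROGDLG ToFlags()
    {
        PROGDLG flags = PROGDLG.Normal;
        if (Modal) flags |= PROGDLG.Modal;
        flags |= ShowTimeRemaining ? PROGDLG.AutoTime : PROGDLG.NoTime;
        if (!AllowMinimize) flags |= PROGDLG.NoMinimize;
        if (!ShowProgressBar) flags |= PROGDLG.NoProgressBar;
        if (Marquee) flags |= PROGDLG.MarqueeProgress;
        if (!AllowCancel) flags |= PROGDLG.NoCancel;
        return flags;
    }
}
```

Phrasing the properties positively (AllowCancel rather than NoCancel) means each one reads naturally in the designer's property grid, and the translation to flags happens in one place when the dialog is shown.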

With my wrapper class, ProgressDialog, you can configure the dialog at design time and thus write less code:

    // set up dialog and display to the user
    wrapper.Show();

    // do work
    wrapper.Line1 = "Working...";
    wrapper.Line2 = "Please wait while the operation completes";

    for (uint i = 0; i < 100; i++) {
        if (wrapper.HasUserCancelled) {
            break;
        }
        else {
            wrapper.Value = i;
            Application.DoEvents();
            Thread.Sleep(150);
        }
    }

    // close dialog
    wrapper.Close();

Final Words

As I said in my introduction, there are many different ways to show progress in a GUI application. The real advantage of Shell Progress Dialogs is that they are rendered by the operating system (thus their appearance is ‘upgraded’ automatically on new versions of Windows, and they always fit the OS theme), and present users with a familiar and consistent interface. They’re not suitable for all applications, but I hope you find my implementation useful if you think they suit the needs of your app.

Download

ProgressDialogs.zip (9KB, includes example)